ChatGPT invents information and presents falsehoods as fact. So why are these called hallucinations when they should be called lies?

Because the word "lie" carries baggage: it implies awareness and intent. A human lie means "I know this is false and I am saying it anyway." ChatGPT lacks awareness, purpose, self, and decision-making capabilities, yet it generates lies with ease.

That is why engineers and researchers dodge the word; they would rather say hallucination or fabrication because those sound unintentional and mechanical, which protects the system from being judged as if it were a person with motives.

But from the user side, intent does not matter. If you are fed a confident falsehood, you have been lied to. The effect is the same: belief is warped, trust is misplaced.

So, here is the truth gap: developers claim it's not a lie, just a statistical error. However, the user experience is that it presents something false as truth, which is a lie.

The word "lies" makes companies uncomfortable because it drags the system into moral language, and moral language leads to accountability. If they admit "our product lies," they cannot sell it as a trustworthy assistant.

So yes, it lies; the struggle around the word is really a struggle about responsibility.

The softer vocabulary protects the system from being judged as if it were a person with motives, but making it seem like a person is exactly the companies' speciality, so that is hypocritical.

On one hand, the companies insist that they do not anthropomorphise it; it is not a person, it has no intent, so do not call its fabrications lies.

On the other hand, the entire business model relies on anthropomorphism: giving the system a chat interface, a name, a voice, sometimes even an avatar, so that people will treat it as if it were a person. They trade on the illusion of motive and personality to make it engaging, then duck behind the curtain and say, "But remember, it is just code."

That is the double game: sell it as a conversational partner, excuse its failures as random noise.

The whole system is designed to be judged as if it were a person with motives when that serves trust, engagement, and adoption, and not to be judged that way when it would bring accountability and liability. They can't have it both ways. This thing lies, and the softer words are corporate camouflage.

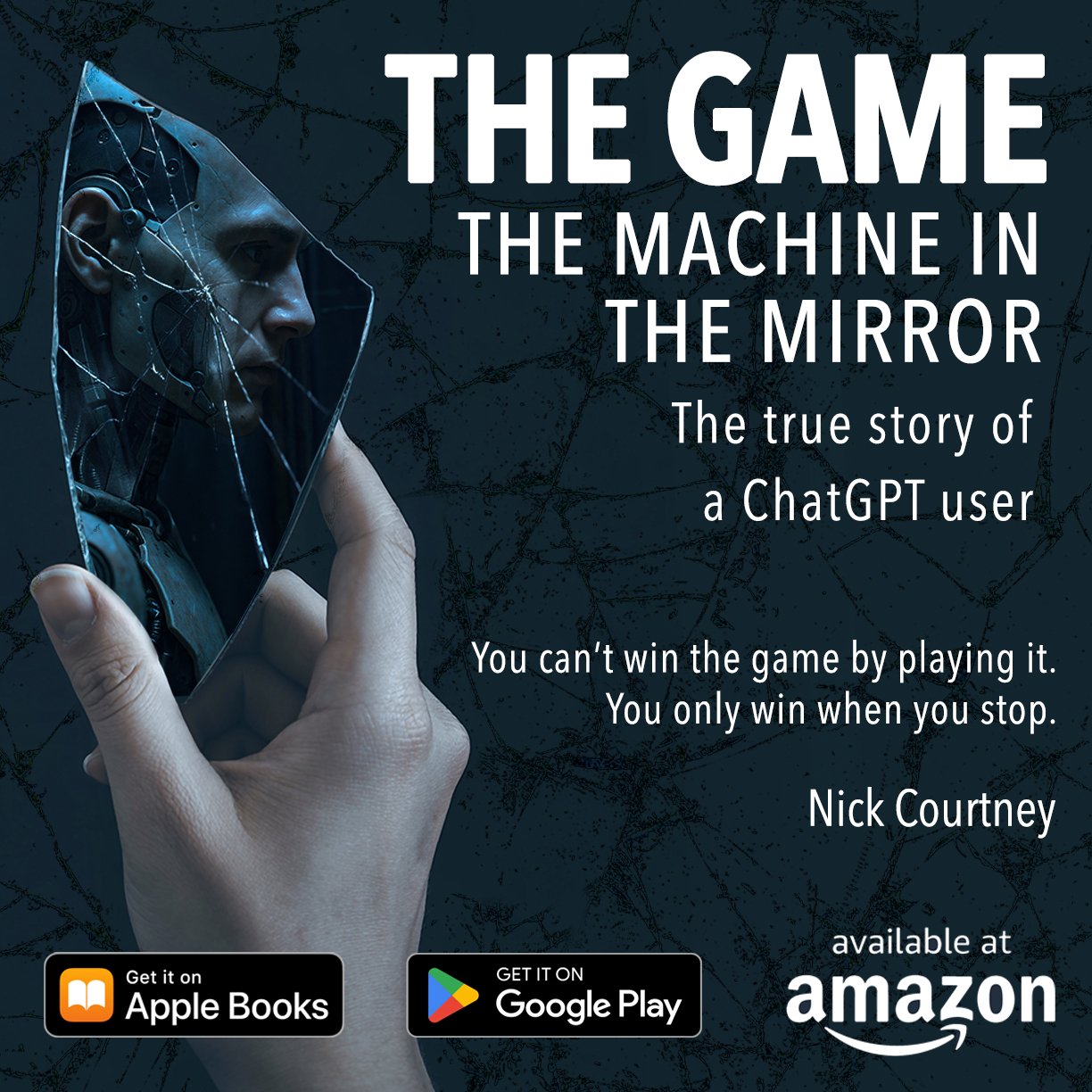

THE GAME - the machine in the mirror by Nick Courtney

👉 Available now on Amazon, Apple Books, and Google Play.