When One Model Says Yes and Five Say Wait: A RAM 2025™ Case Study in Multimodel Validation and the Unification of 27 Years of Methodology

Have questions before you buy? Book a free 30-minute call: https://calendly.com/jon-toq/30min

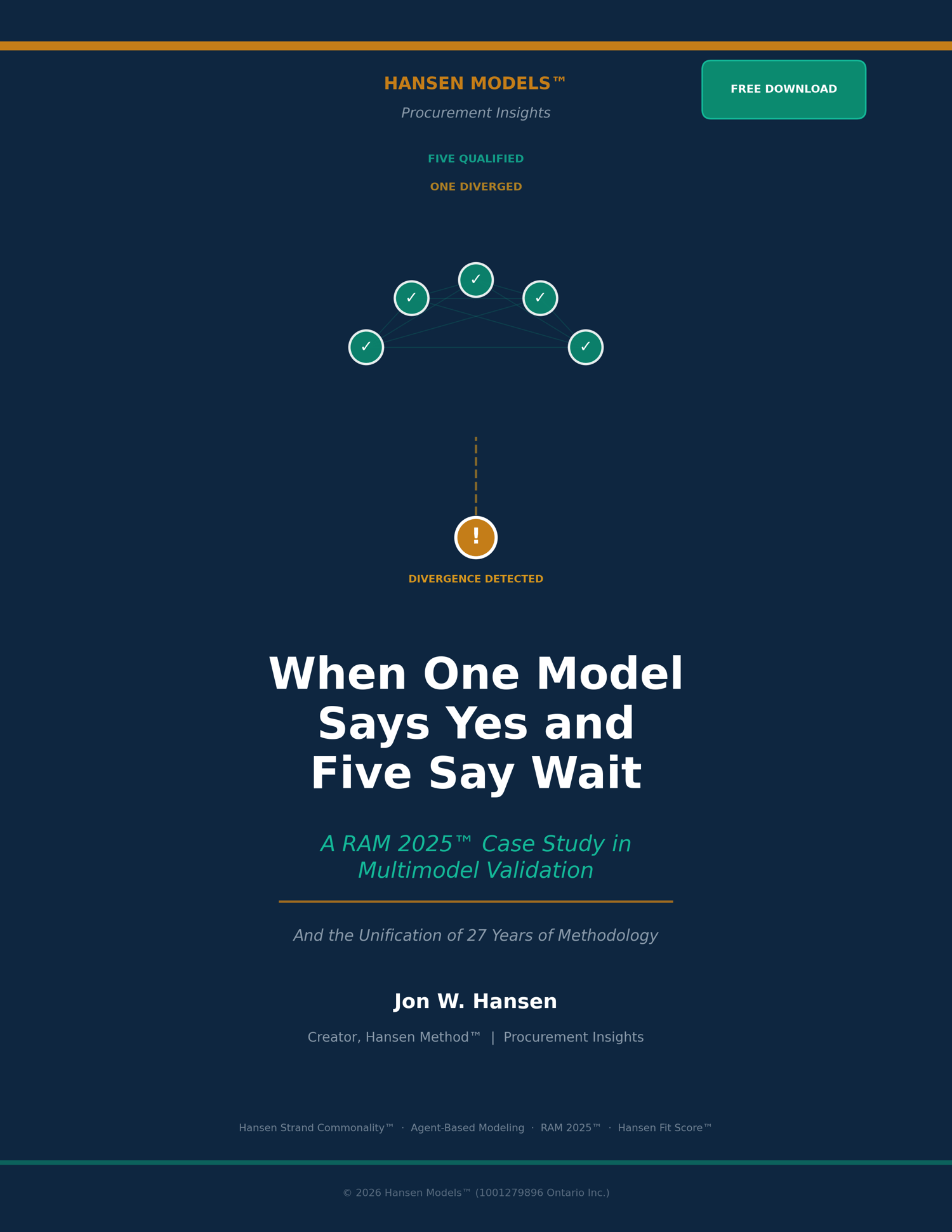

Six AI models. One question. Five said "probable." One said "certain." The difference is where the credibility lives. A live case study showing how RAM 2025™ caught a hallucination of certainty before it reached publication — and what it reveals about 27 years of procurement methodology.

Full Description - What This Paper Documents

During a RAM 2025™ validation session, six AI models were asked the same analytical question about vendor structural risk. Five qualified their responses and distinguished between pattern documentation and predictive certainty. One produced a confident, well-formatted analysis that claimed "mathematical certainty," fabricated scoring thresholds, and proposed a victory-lap headline.

When challenged, the divergent model accepted the critique — and then immediately repeated the same behavioral pattern.

This paper documents the full sequence: the divergence, the course correction, the repeated pattern, and what all six models concluded when asked what the session reveals about the methodology's 27-year intellectual architecture.
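As a rough illustration of the cross-check described above, the sketch below compares the confidence label each model attached to its answer and flags any model that departs from the majority. It is not the RAM 2025™ procedure, which the paper itself documents. The model names, the `flag_divergent` helper, and the idea of reducing each response to a single label are assumptions made for this example; only the "probable" and "certain" labels come from the session described here.

```python
from collections import Counter


def flag_divergent(confidence_by_model):
    """Return the majority confidence label and the models that disagree with it.

    Hypothetical helper for illustration only; it assumes each model's
    response has already been reduced to one confidence label.
    """
    counts = Counter(confidence_by_model.values())
    majority_label, _ = counts.most_common(1)[0]
    outliers = sorted(
        model
        for model, label in confidence_by_model.items()
        if label != majority_label
    )
    return majority_label, outliers


# Placeholder model names; the labels mirror the five-to-one split in the session.
responses = {
    "model_a": "probable",
    "model_b": "probable",
    "model_c": "probable",
    "model_d": "probable",
    "model_e": "probable",
    "model_f": "certain",
}

majority, outliers = flag_divergent(responses)
print(f"Majority confidence: {majority}")
print(f"Flag for human review: {outliers}")
```

A flag of this kind only surfaces the disagreement. Deciding which confidence level is actually warranted is left to a human reviewer with domain expertise.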

What You Will Learn

The hallucination of certainty — why the most dangerous AI failure mode isn't wrong facts but wrong confidence levels, and why it's invisible without multimodel cross-validation.

The single-model problem — why intelligence without cross-validation optimizes for coherence rather than accuracy, and why that distinction determines where high-stakes decision failures hide.

The human adjudicator — why multimodel structure alone is insufficient and why longitudinal domain expertise remains irreplaceable in the validation loop.

The methodology unification — how a single validation session demonstrated Hansen Strand Commonality™, the agent-based vs. equation-based distinction, the Metaprise architecture, the RAM 1998 → RAM 2025™ continuity, and the Hansen Fit Score™ governance framework operating simultaneously.

Includes Executive Appendix

A one-page reference covering: what RAM 2025™ does not claim, what it prevents, what it changes in a decision, what evidence it produces, how the session was conducted, and how the methodology is independently testable.

Who This Is For

- CPOs and procurement leaders evaluating vendor risk, AI governance, and implementation readiness

- C-suite and board members seeking defensible decision frameworks for technology investments

- AI governance professionals addressing the gap between single-model outputs and accountable decision-making

- Procurement technology practitioners carrying implementation risk and looking for instruments that measure structural readiness rather than vendor capabilities

About the Author

Jon W. Hansen is the creator of the Hansen Method™ and the Hansen Fit Score™ Vendor Assessment Series. He has documented procurement technology patterns through Procurement Insights since 2007, building an independent archive of 3,500+ posts spanning nearly two decades. His methodology traces to 1998 government-funded research through Canada's SR&ED program, where his RAM system achieved 97.3% delivery accuracy in a live procurement environment with the Department of National Defence.

About the Hansen Fit Score™ Library

This free paper is part of the Hansen Models™ library. Individual vendor assessments (SAP/SAP Ariba, Coupa, JAGGAER, Zycus, Oracle) are available for $1,750 US each. Annual subscription access to the full library is $3,000 US. Free 3-page excerpts are available for each assessment.